Copilot Agent - My Experimentation and Learnings

Over the past few weeks, I've spent a lot of time experimenting with Microsoft Copilot Studio, trying to build an AI agent that understands my instructions, retrieves specific files, and shares them with selected recipients through a chosen medium. It is a simple use case, but one that I felt could save me the trouble of hunting for files across the many folders and sub-folders in my OneDrive. What started as curiosity kept me glued to my laptop, with each iteration either frustrating me or boosting my confidence.

After a lot of back and forth, the overall structure for my agent looked something like this:

- Purpose

- Behavior

- File Search Logic

- Email Logic

- Follow-up Flow

- Technical Setup

- Error Handling

Ask and thou shalt get

I asked the Copilot to keep a friendly, professional, and approachable tone, to avoid technical jargon, to respond promptly, and to follow up when it needed clarification. I noticed that when the Copilot replied in a simple, friendly way, saying things like “Let me check that for you” or “Here’s what I found”, people were more patient and responded better. When it sounded too robotic or formal, or kept confirming the user's input, users lost interest quickly (no one really has that much time or patience). Designing the tone to be approachable made a big difference in how the AI was perceived.

One of the first things I noticed was how important context is. If you don't tell Copilot where to focus or how to restrict itself, it either forgets what the user asked earlier or brings in unrelated information. For example, when I started out without proper instructions (such as “look in my OneDrive”), it returned results from the general web and SharePoint too. The fix was simple: I added a system instruction saying, “Only search files in my OneDrive, not in any other source.” The answers immediately became much more accurate.
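
To keep a scoping rule like this explicit and easy to adjust, it can help to generate it from a short list of sources. The sketch below is only an illustration: `scope_instruction` is a hypothetical helper, not part of Copilot Studio, and its output is plain text that you would paste into the agent's instructions yourself.

```python
# Hypothetical helper: builds a scoping rule to paste into the agent's instructions.
# Nothing here calls Copilot Studio; it only produces text.
def scope_instruction(allowed: str, excluded: list[str]) -> str:
    """Return a rule that names the only permitted source and the ones to avoid."""
    rule = f"Only search files in {allowed}, not in any other source."
    if excluded:
        rule += " Do not return results from " + ", ".join(excluded) + "."
    return rule


print(scope_instruction("my OneDrive", ["SharePoint", "the general web"]))
```

Naming the sources to avoid, and not just the one to use, mirrors what went wrong in my first attempt, where SharePoint and web results crept in.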

Breaking complex tasks into smaller, simpler asks

Another big learning was about structure. Initially, I tried building one large flow that handled everything (user queries, file searches, confirmations, follow-ups) all in one place. It quickly got messy. Breaking the logic into smaller parts worked far better. I created step-wise instructions with titles and branching, much like the flows you build in Power Automate. The result looked neater and more structured, and it was easier for me to spot the instructions I wanted to tweak.
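
As a rough illustration of titled, step-wise instructions with branching, here is a minimal sketch that keeps each section separate and renders them into one block of plain text. The section titles come from my outline; the step wording and the `render_instructions` helper are made up for this example and are not the exact text my agent uses.

```python
# Section titles follow the outline in this post; the steps under each title
# are hypothetical and only show the shape of step-wise, branching instructions.
SECTIONS = {
    "Purpose": ["Help the user find a file in OneDrive and share it."],
    "File Search Logic": [
        "Ask for the file name or a keyword.",
        "If exactly one file matches, show it and ask for confirmation.",
        "If several files match, list them and ask the user to pick one.",
        "If nothing matches, ask for a different keyword.",
    ],
    "Email Logic": [
        "Ask who should receive the file.",
        "Confirm the recipients before sending.",
    ],
}


def render_instructions(sections: dict[str, list[str]]) -> str:
    """Turn titled sections into numbered plain text, one block per section."""
    blocks = []
    for title, steps in sections.items():
        lines = [f"{title}:"]
        lines += [f"{i}. {step}" for i, step in enumerate(steps, start=1)]
        blocks.append("\n".join(lines))
    return "\n\n".join(blocks)


print(render_instructions(SECTIONS))
```

Because every section lives under its own title, changing the file search behavior means editing one short list without touching the rest.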

Integration Challenges

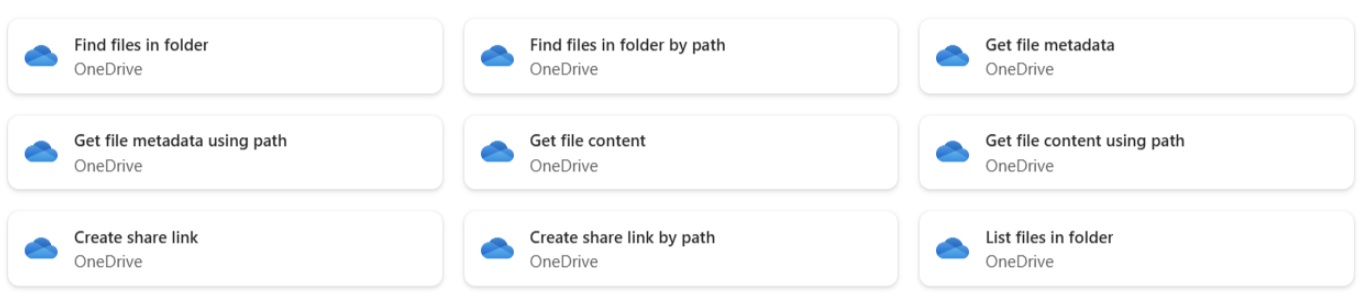

Then came the integration challenges. Connecting to tools like OneDrive sounds easy: you go to the Tools section and add the desired tool (in this case, the OneDrive connector). In my case, however, the agent kept returning an “Access denied” error even after authentication, although the connection was valid. I tried the different OneDrive actions available (Find a File, Create share link, List File, etc.) and eventually gave up. (If the connectors work for you, use them; they should be your first choice.)

What I did next was give structured prompts in the Instructions section for the connection. I made it clear that I only wanted the agent to look in my OneDrive and not in any other resource, and I made sure that web search was disabled. It worked! I was able to retrieve files from my OneDrive without any connectors. This approach may not work in every case, and I am still exploring it.

Controlling the flow

The next challenge came when the agent started throwing multiple questions at me in one go. As soon as I answered the first one, it moved on to the next steps and skipped the rest, which sent my entire flow haywire. I then instructed it to “Ask one question at a time and confirm before moving to the next step.” The agent immediately became much easier to use, and the flow worked better.
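
To make the behavior I was asking for concrete, here is a small simulation of a one-question-at-a-time flow with a confirmation after each answer. It is a sketch of the conversational pattern only, not of how Copilot Studio works internally; the questions, the `run_flow` function, and the scripted replies are all hypothetical.

```python
from typing import Callable

# Hypothetical questions for the file-sharing flow; not taken from the real agent.
QUESTIONS = {
    "file": "Which file are you looking for?",
    "recipient": "Who should receive it?",
    "medium": "How should I send it (email or share link)?",
}


def run_flow(ask: Callable[[str], str]) -> dict[str, str]:
    """Ask each question on its own and confirm the answer before moving on."""
    answers: dict[str, str] = {}
    for key, question in QUESTIONS.items():
        while True:
            reply = ask(question).strip()
            confirmed = ask(f"You said '{reply}'. Is that right? (yes/no)")
            if reply and confirmed.strip().lower() == "yes":
                answers[key] = reply
                break  # only now move on to the next question
    return answers


# Scripted replies stand in for a user; the second answer is corrected once.
scripted = iter(["budget.xlsx", "yes", "Priya", "no", "Priya Rao", "yes", "email", "yes"])
result = run_flow(lambda prompt: next(scripted))
print(result)
```

The inner loop is the important part: the flow cannot reach the next question until the current answer has been given and confirmed, which is exactly what the agent was skipping before.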

Final Thoughts

Here are my two cents: I learned that fine-tuning Copilot prompts isn't about writing perfect code. It's about building clear logic and communicating well with both the AI and the user. The best Copilots aren't the most complex ones; they're the ones that understand what you mean, not just what you say.

So go ahead, blend structure with simplicity — define the goal, test often, and make it human. When those three things come together, AI feels less like a tool and more like a real teammate.